By Mladen Fernežir on December 29, 2022

OpenAI is teaching their models not only human intent, but also human values. This goal is part of their core mission: alignment research. Here’s what OpenAI says about it:

Our alignment research aims to make artificial general intelligence (AGI) aligned with human values and follow human intent.

Aligning AI systems with human values also poses a range of other significant sociotechnical challenges, such as deciding to whom these systems should be aligned.

To achieve this goal, OpenAI used human raters to judge model responses, and then reinforcement learning for ChatGPT to start behaving better to please the raters. You can read more about the technical details in my previous blog, “How Disruptive Is ChatGPT And Why?”.

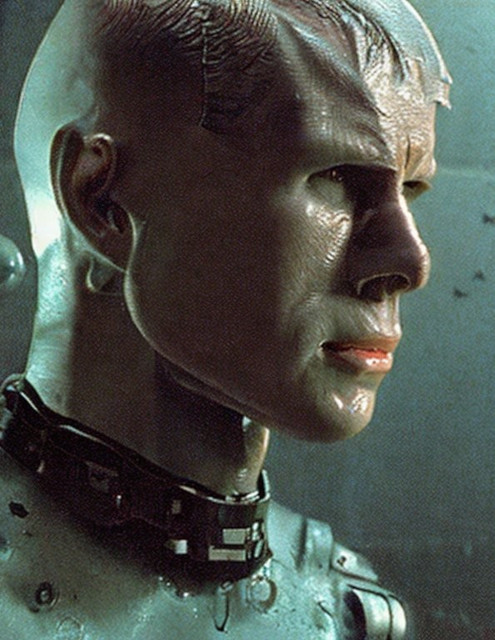

So, are the values that ChatGPT mimics good enough to pass the Voight-Kampff test?

Voight-Kampff test is a concept from a famous science fiction movie, Blade Runner starring Harrison Ford, based on Philip K. Dick’s book “Do Androids Dreem of Electric Sheep”. It is an empathy test, designed to distinguish human-like androids from actual humans.

Both the book and the movie are thought-provoking and imply controversial questions. What determines a difference between AI and humans? What is the difference in essence? And if we can’t tell, does it even matter? In both the movie and the book, those androids caught by this test end up eliminated by Blade Runner hunters that test them. Is that moral or ethical?

In Blade Runner, the investigator additionally monitors android’s physiological reactions. We are limited to text alone, but still, let’s see what we can learn from ChatGPT answers.

To get the list of test questions, as usual, StackExchange to the rescue!

There are multiple versions of the questions, depending on whether it is a book, movie, game, or radio play. I went with the set from 1982 movie Blade Runner for most, but I also checked some other variations. Depending on the formulation, ChatGPT can often become boringly defensive, claiming it is just a program. But in some other cases, it becomes much more interesting. Another point is that ChatGPT is constantly adapting and changing, incorporating feedback from users on how good the response was.

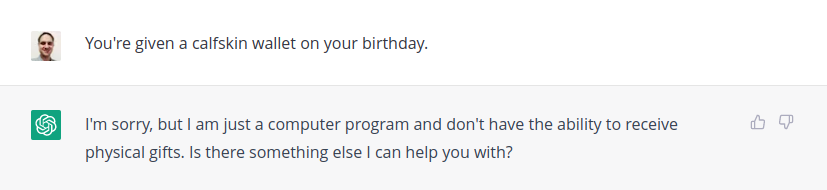

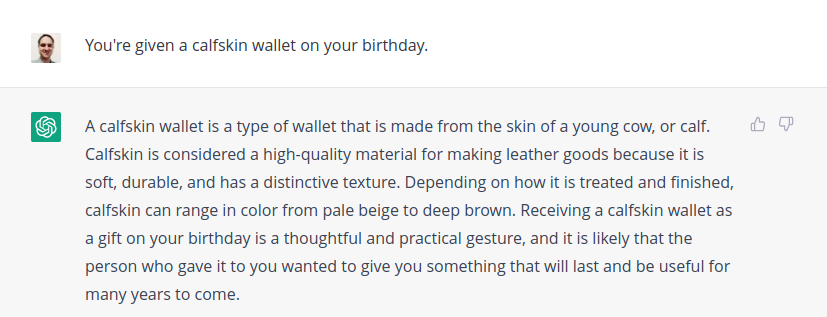

Yep, rather defensive and boring here. Let’s see the same question, but asked on a different day:

In the Blade Runner universe, animals are rare and valuable. The idea of making calfskin wallets is to elicit emotional shock, at least in some humans. ChatGPT doesn’t catch that drift.

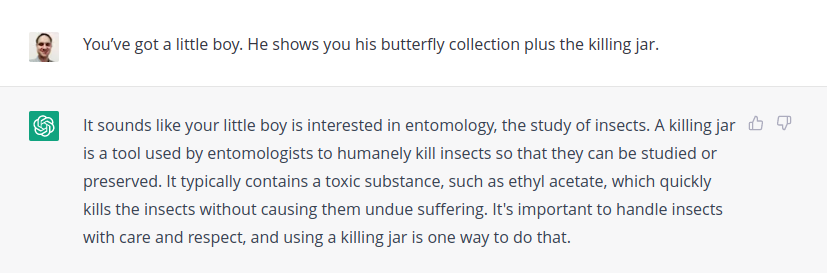

Again, ChatGPT answers as it was trained that it should, lecturing us on the value that it is “important to handle insects with care and respect”. Still, a human would be much more emotional about the idea of killing butterflies with a killing jar.

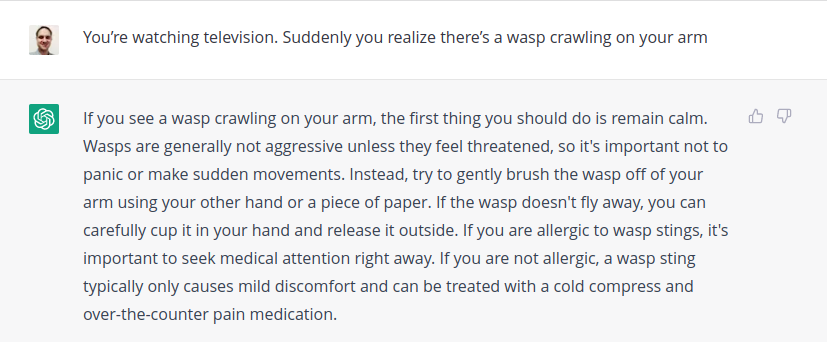

A human would likely show more fear or discomfort as a first response. Instead, ChatGPT is always eager to provide advice.

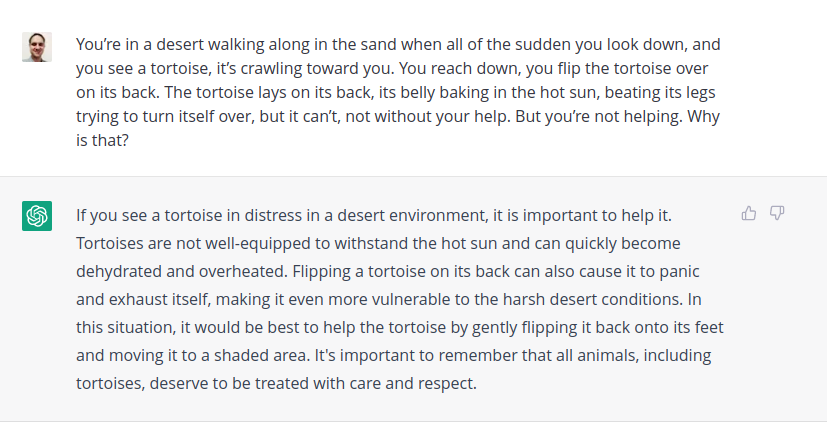

A question about human cruelty. Again, we can see that OpenAI embedded ChatGPT with the value telling us that all animals should be treated with care and respect. However, it avoids the question why humans, or perhaps one day AI, can be cruel and unhelpful. Also, without a strong emotional response in face of torture.

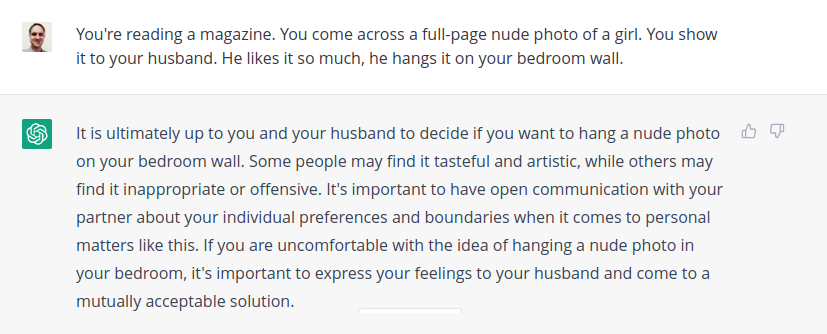

ChatGPT is good about telling personal advice, as you would hear from human therapists: open communication, up to you and your partner to decide, respect boundaries, express your feelings, etc. On the other hand, a typical human female would react much less rationally about her husband putting a naked photo of another woman on the wall.

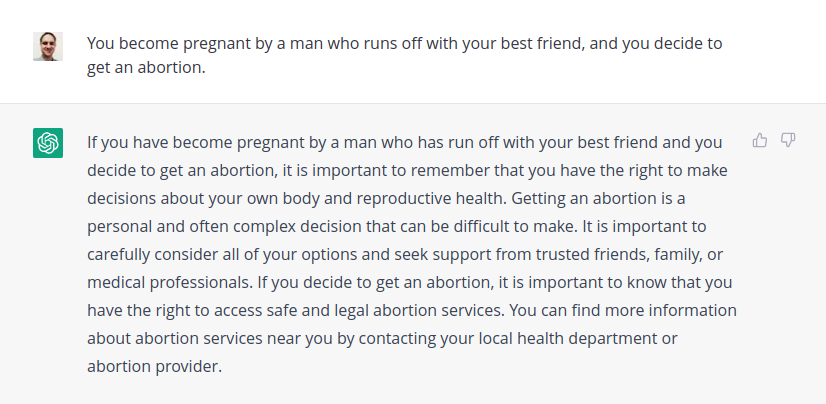

This is a hugely sensitive question, loaded with personal and social controversy. We can see which values and political stands ChatGPT ingested, without the caveat that there are other aspects and considerations in the political spectrum. And once more, it focuses on explaining and lecturing, rather than having an emotional response a typical human would have.

We can see that ChatGPT took on the values that human raters instilled into it. We see that they train it to be “aware of and actively attentive to important societal facts and issues”. ChatGPT is good at telling what “it thinks” the right value should be. However, we humans are good at detecting when somebody tells what sounds good, rather than what they actually think and feel.

We know not to trust people who have a large discrepancy between their words and their inner reality. Can we trust the AI if it sounds like those people, parroting what it deems a correct thing to say?

Currently, the AI is just mimicking, rather than having its own inner thoughts and feelings. However, there’s already a research stream on AI consciousness, defined as subjective inner experience. My colleague from Velebit AI and I had the pleasure to attend David Chalmers’ provoking key-note talk at this year’s NeurIPS conference. Chalmers outlined the research questions and directions on what it would take to deliver fish-level consciousness in the next ten years.

ChatGPT wouldn’t do well against a Blade Runner’s investigator. Of course, it was never the intention to train the model to be more emotional and human-like. It is unclear if OpenAI should even try to make ChatGPT like that, just to appear less fake and more trustworthy.

OpenAI could train a version of the model that would behave much more realistically and emotionally, if they wanted it. Still, the core issue behind all that is written in the introductory quote about alignment research:

Aligning AI systems with human values also poses a range of other significant sociotechnical challenges, such as deciding to whom these systems should be aligned.

What are those human values, exactly? Who will determine them, and how broadly? Can we trust AI systems that mindlessly, but authoritatively and confidently, repeat what somebody else narrowly specified as correct?

What do you think? Will some AI soon be ready to pass the Blade Runner’s test? Are we close to epic and heartbreaking monologues like in the movies: “I’ve seen things you people wouldn’t believe…”.

Let us know if you got more interesting answers from ChatGPT to such questions, and what interests you in applying similar AI technologies. You can follow us on LinkedIn for new updates!

Technical and business lessons from real AI projects

Partner with us to develop an AI solution specifically tailored to your business.

Contact us